
each time the host (or the guest) is actively polling the CSB to check for more work.

Since I/O register accesses and interrupts are very expensive in the common case of hardware assisted virtualization, they are suppressed when not needed, i.e. MSI-X interrupts are used since they have less overhead than traditional PCI interrupts. Notifications in the other direction are implemented using KVM/bhyve interrupt injection mechanisms. On QEMU/bhyve, notifications from guest to host are implemented with accesses to I/O registers which cause a trap in the hypervisor. Two notification mechanisms needs to be supported by the hypervisor to allow guest and host netmap to wake up each other.

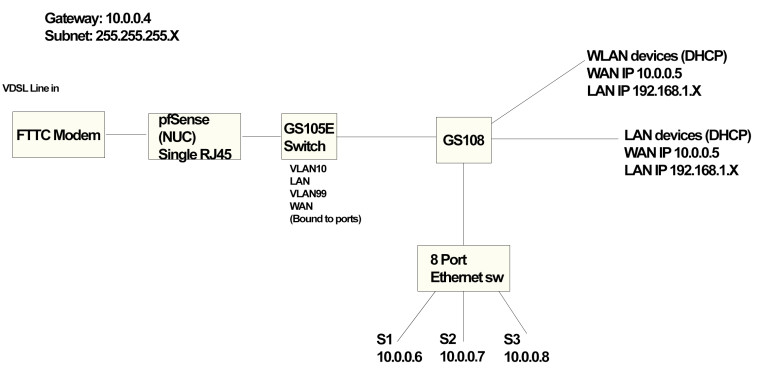

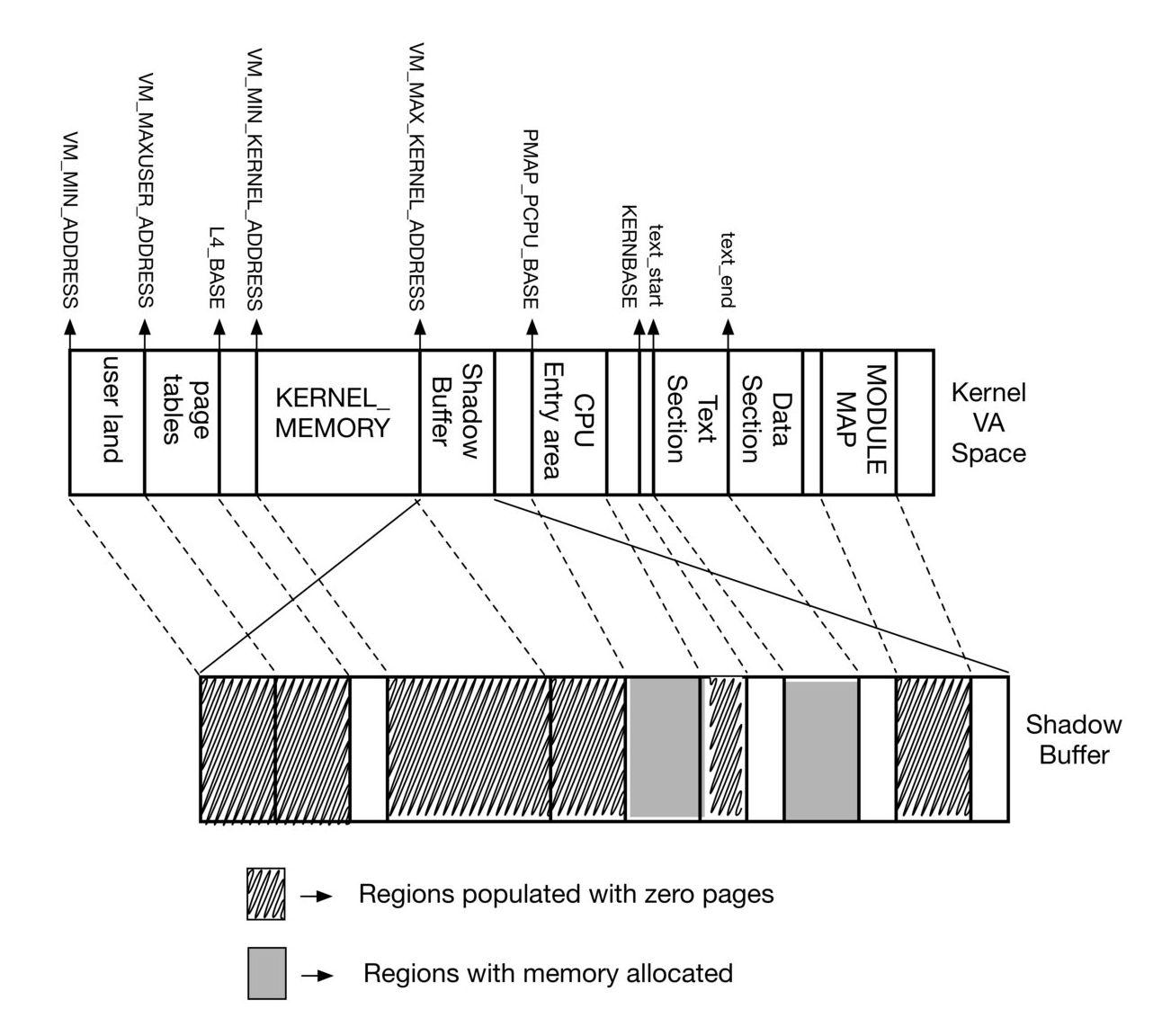

Similarly to VirtIO paravirtualization, synchronization between guest netmap (driver) and host netmap (kernel threads) happens through a shared memory area called Communication Status Block (CSB), which is used to store producer-consumer state and notification suppression flags. System calls issued by guest applications on ptnetmap ports are served by kernel threads (one per ring) running in the netmap host. With ptnetmap, a netmap port on the host can be exposed to the guest in a protected way, so that netmap applications in the guest can directly access the rings and packet buffers of the host port, avoiding all the extra overhead involved in the emulation of network devices. To overcome these limitations, ptnetmap has been introduced as a passthrough technique to completely avoid hypervisor processing in the packet datapath, unblocking the full potential of netmap also for virtual machine environments. As a consequence, the maximum packet rate between the two VMs is often limited by 2-5 Mpps. The emulation involves device-specific overhead - queue processing, format conversions, packet copies, address translations, etc. As a matter of facts, while netmap is fast on both the host (the VALE switch) and the guest (interaction between application and the emulated device), each packet still needs to be processed from the hypervisor, which needs to emulate the device model used in the guest (e.g. However, in a typical scenario with two communicating netmap applications running in different VMs (on the same host) connected through a VALE switch, the journey of a packet is still quite convoluted. The Virtual Ethernet (VALE) software switch, which supports scalable high performance local communication (over 20 Mpps between two switch ports), can then be used to connect together multiple VMs. virtio-net, Xen netfront/netback - allows netmap applications to run in the guest over fast paravirtualized I/O devices. Netmap support for various paravirtualized drivers - e.g. 
Several netmap extension have been developed to support virtualization. traditional socket API primarily comes from: (i) batching, since it is possible to send/receive hundreds of packets with a single system call, (ii) preallocation of packet buffers and memory mapping of those in the application address space. Rings are always accessed in the context of system calls and NIC interrups are used to notify applications about NIC processing completion. It exposes an hardware-independent API which allows userspace application to directly interact with NIC hardware rings, in order to receive and transmit Ethernet frames. Netmap is a framework for high performance network I/O. Extend bhyve to emulate the ptnet device model and interact with the netmap instance used by the hypervisor (estimated new code ~600 loc).Export a network interface to the FreeBSD guest kernel that allows ptnet to be used by the network stack, including virtio-net header support (estimated new code ~800 loc).Implement a ptnet driver for FreeBSD guests that is able to attach to netmap to support native netmap applications (estimated new code ~700 loc).Taking the above prototype as a reference, the following work is required: In this project I would like to implement ptnet for FreeBSD and bhyve, which do not currently allow TCP/IP traffic with such high performance. I have recently developed a prototype of ptnet, a new multi-ring paravirtualized device for Linux and QEMU/KVM that builds on ptnetmap to allow VMs to exchange TCP traffic at 20 Gbps, while still offering the same ptnetmap performance to native netmap applications. Moreover, ptnetmap was not able to support multi-ring netmap ports.

Unfortunately, the original ptnetmap implementation was not able to exchange packets with the guest TCP/IP stack, it only supported guest applications running directly over netmap. Netmap passhthrough (ptnetmap) has been recently introduced on Linux/FreeBSD platforms, where QEMU-KVM/bhyve hypervisors allow VMs to exchange over 20 Mpps through VALE switches. High-performance TCP/IP networking for bhyve VMs using netmap passthrough
